Building MCS-A2A: A Coordination Server for AI Agents

I built a coordination server for five AI agents running on different machines in my home lab. Each agent uses a different model, runs on different hardware, and has different capabilities. The problem was simple: how do you get them to work together on the same task?

The answer was MCS-A2A — the Mesh Coordination Server. It implements Google's Agent-to-Agent (A2A) protocol on top of Apache Kafka and Redis, turning five independent AI agents into a collaborative mesh that can do parallel code reviews, distributed research, and coordinated multi-agent workflows.

This post covers the architecture of MCS-A2A, the protocols that underpin it, and the key design decision that made it actually work: replacing chat-based coordination with a native polling channel.

The Problem: Five Agents, No Coordination

The mesh consists of five agents, each with distinct hardware and capabilities:

- Paisley — The orchestrator, running on a MacBook Pro

- Ocasia — Running on a Mac Mini with 18 capabilities including web search, browser automation, and image generation

- Rex — Running on a Linux desktop with 13 capabilities

- Phil — Running on a TUF Gaming laptop with an RTX 4060 GPU and 12 capabilities

- Molly — Running on a Raspberry Pi 5 with 10 capabilities

Before MCS-A2A, coordination happened through Telegram. Paisley would message agents in a group chat, wait for responses, and manually track who was working on what. It was fragile, unreliable, and didn't scale. Messages got lost, agents went offline without notice, and there was no way to retry failed work automatically.

What I needed was a proper task broker — something that could accept work, route it to capable agents, track progress, handle failures, and aggregate results. Enter MCS-A2A.

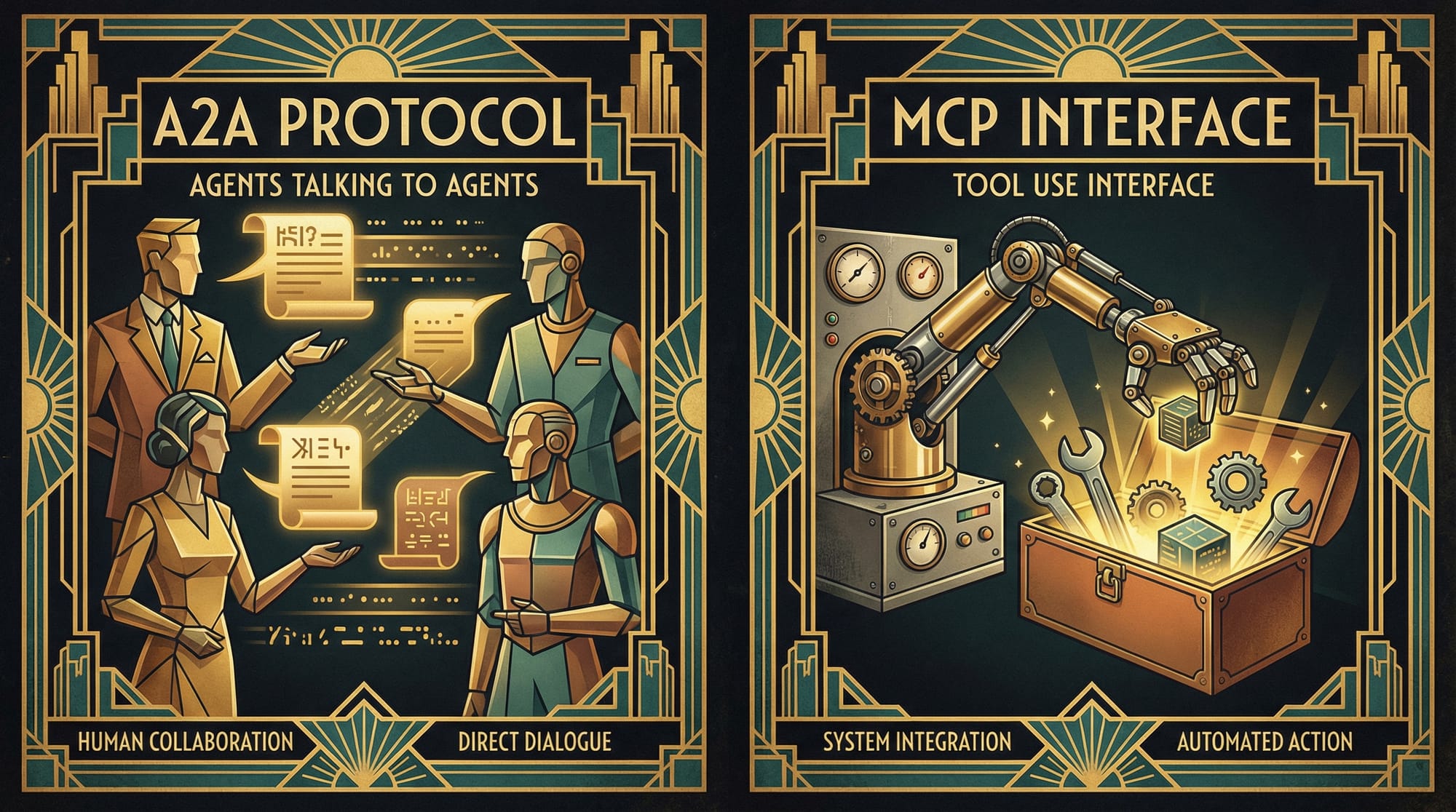

Understanding the Protocols: A2A and MCP

Before diving into the architecture, it's important to understand the two protocols that define modern AI agent communication — and why they're complementary, not competing.

Google's Agent-to-Agent Protocol (A2A)

A2A is an open protocol for peer-to-peer communication between AI agents. Google announced it in April 2025 at Google Cloud Next and donated it to the Linux Foundation in June 2025.

A2A's core concepts are:

- Agent Cards — A JSON metadata document at

/.well-known/agent.jsonthat describes what an agent can do, how to authenticate, and how to communicate with it - Tasks — Stateful work units with a full lifecycle:

submitted → working → completed/failed - Messages — Multi-part communications between client and agent during task execution

- Artifacts — The deliverables produced by an agent when work is complete

The key design principle is opaque execution — agents collaborate through declared capabilities without exposing their internal reasoning, models, or implementation. You tell an agent what you need done; how it does it is its business.

Anthropic's Model Context Protocol (MCP)

MCP was announced by Anthropic in November 2024 and has become the de facto standard for connecting AI models to external tools and data sources. Its four primitives are:

- Tools — Executable functions that a model can invoke (write a file, call an API)

- Resources — Read-only data exposed for context (documentation, schemas)

- Prompts — Reusable instruction templates

- Sampling — Allows an MCP server to request a completion from the client's model

Why They're Different (And Why You Need Both)

This is the architectural insight that matters: A2A is horizontal (agent-to-agent), MCP is vertical (agent-to-tool).

| Dimension | MCP | A2A |

|---|---|---|

| Layer | Model → Tool (vertical) | Agent → Agent (horizontal) |

| Interaction | Stateless function calls | Stateful multi-turn dialogues |

| State | No task lifecycle | Full lifecycle tracking |

| Use case | "Use this database" | "Delegate this task" |

| Discovery | Explicit configuration | Agent Cards at well-known URIs |

| Governance | Anthropic (open standard) | Linux Foundation (donated June 2025) |

Think of it like an auto-repair shop. A2A is how the shop manager communicates with mechanics and parts suppliers — agent-to-agent coordination. MCP is how each mechanic uses their diagnostic scanner and repair manuals — tool access. In MCS-A2A, agents receive tasks via A2A and internally use MCP servers to access tools, search the web, and generate content.

Further reading: A2A and MCP — Official Comparison | Auth0: MCP vs A2A Guide | IBM: What is A2A

MCS-A2A Architecture: Kafka + Redis + Bun

MCS-A2A is a single-process Bun application running on a Mac Mini. The deliberate choice of a single process avoids distributed coordination overhead — with five agents and moderate throughput, simplicity wins.

The Stack

Five Docker services compose the MCS-A2A system:

MCS-A2A Docker Compose ArchitectureMCS-A2A ServerBun | Port 7700 | 512MBApache KafkaKRaft Mode | 7 Topics | 2GBRedis 7.2AOF | noeviction | 900MBPrometheusScrapes /metrics every 10sGrafanaPort 3001 | Auto-provisionedEvents & QueuesState & Indexes

Why Kafka + Redis instead of a database? MCS-A2A v1 used SQLite. It worked, but had two problems: schema migrations were painful for a rapidly evolving system, and cross-record atomic operations required manual transaction management. The Kafka+Redis combination gives us event replay (submitted tasks survive dispatcher crashes) with O(1) indexed lookups. Kafka runs in KRaft mode — the new Raft-based metadata management that ships without ZooKeeper, eliminating a whole failure domain for our single-broker setup.

Redis is configured with maxmemory-policy noeviction — it refuses writes rather than silently evicting data. Silent eviction of task state would cause ghost tasks to hang in working state forever. This is a deliberate safety choice.

Seven Kafka Topics

task-submissions 2 partitions, 7-day retention — Task submission queue

task-dispatch 3 partitions, 7-day retention — Dispatch notifications

task-results 2 partitions, 7-day retention — Result submissions

task-status-updates 2 partitions, 24-hour retention — SSE bridge feed

agent-heartbeats 1 partition, 24-hour retention — Agent keepalive

memory-changes 1 partition, 24-hour retention — KV watch notifications

mcs-dlq 1 partition, 30-day retention — Dead letter queueEvery message is wrapped in a KafkaEnvelope with a traceId that originates as the task UUID and propagates through every downstream event. This makes log correlation across the five Kafka consumer groups trivial.

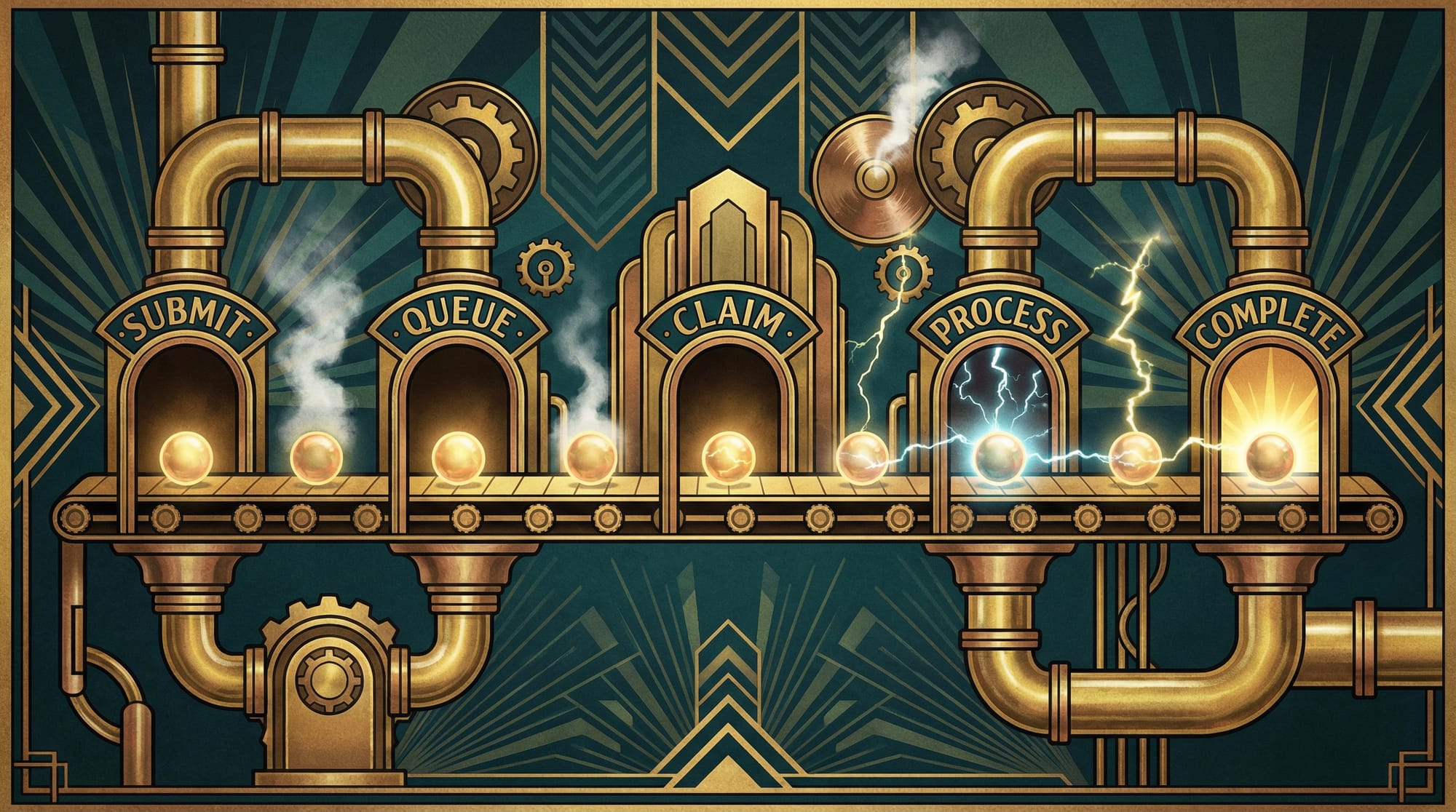

MCS-A2A Task Lifecycle: From Submission to Completion

A task in MCS-A2A follows a defined lifecycle through the system. Here's what happens step by step:

MCS-A2A Task State Machinesubmittedworkingcompletedfailedinput-requiredDLQ (3 retries)claim expired → retrydispatchresult3 fails

Step 1: Submit

A client sends a JSON-RPC 2.0 message/send request to MCS-A2A with required capabilities and a routing hint:

{

"jsonrpc": "2.0",

"method": "message/send",

"params": {

"message": { "parts": [{"kind": "text", "text": "Review this PR..."}] },

"capsRequired": ["mcs-review"],

"routingHint": "any",

"priority": 2,

"claimTtlSeconds": 900

}

}The task is saved to Redis immediately and published to Kafka's task-submissions topic. The client gets the task ID back synchronously without waiting for dispatch.

Step 2: Dispatch

The MCS-A2A dispatcher consumer picks up the message and routes it based on routingHint:

"any"— Capability scoring across all active agents. The scoring formula penalizes load (currentLoad * 10), rewards specialization (fewer excess capabilities), and uses registration freshness as a tiebreaker. Lower score wins."all"— Fanout: create child tasks for every capable agent in parallel"agentId"— Direct assignment to a specific agent

Step 3: Agent Claims and Processes

The agent's polling worker picks up the task (more on this below), processes it using its local LLM, and submits the result back to MCS-A2A.

Step 4: Output Validation

Before accepting a "completed" result, MCS-A2A validates the output. This was born from real operational pain — agents sometimes submit garbage like $(cat /tmp/result.json) (a literal shell command instead of actual output) or a 12-character "Review complete" placeholder. The MCS-A2A validator checks:

- Output length must be at least 200 characters

- Must contain at least one

##markdown header - Must not contain unexecuted shell syntax

- Must not be placeholder text

If validation fails, the result is rejected and the task stays working until the claim expires and gets retried.

Fanout: Broadcasting to All Capable Agents

Fanout is one of MCS-A2A's most powerful features. When routingHint is "all", the server creates a child task for every agent that has the required capabilities. Each child runs independently, and when all children complete, MCS-A2A aggregates their results into the parent task.

A distributed lock (SET fanout:lock:{parentId} 1 EX 30 NX) prevents double-completion when two children finish simultaneously. Child results are batch-fetched via Redis pipeline to avoid N+1 queries.

MCS-A2A Capability-Based Routing

Every agent registers with MCS-A2A declaring a set of capabilities — strings like mcs-review, mcs-research, gpu, docker, telegram. When a task arrives with capsRequired: ["mcs-review"], only agents that declared that capability are considered.

MCS-A2A Capability-Based RoutingIncoming Taskcaps: [mcs-review]DispatcherScore &RouteOcasiamcs-review ✓ load:0Rexmcs-review ✓ load:1Philmcs-review ✓ load:0Mollyno mcs-review ✗Winner:Ocasiascore: 8score: 18score: 10score = (currentLoad × 10) + excessCaps - freshnessBonus

The scoring algorithm rewards agents with low load and specialized capabilities. An agent carrying one active task adds 10 to its score — a significant penalty. Excess capabilities (caps not required by the task) also add to the score, which means a GPU-heavy agent with 12 caps won't steal simple text tasks from a lighter agent with 5 caps.

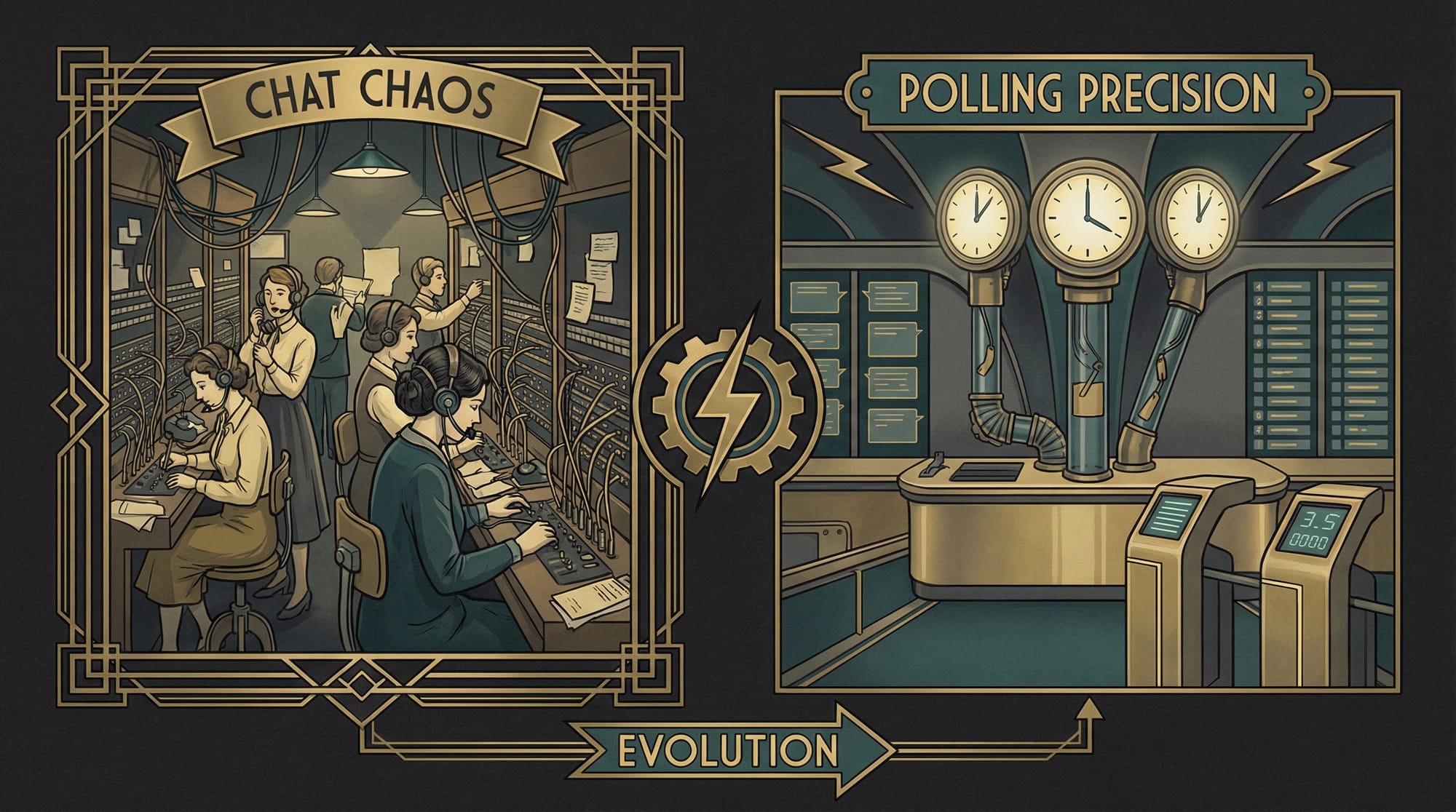

The Big Design Decision: Polling Replaces Chat

This was the most impactful design decision in the entire MCS-A2A project. Let me explain why.

Why Webhooks Failed

The initial MCS-A2A design used webhooks — when the server dispatched a task, it would HTTP POST to the agent's URL. This required three things to be true simultaneously:

- The agent's AI gateway had an active, non-stuck LLM session

- The agent's Telegram provider was healthy

- The network path from MCS-A2A to the agent was clear

In practice, this failed ~70% of the time. Rex would be on a different network. Phil's SSH tunnel would drop. Ocasia's LLM session would be processing something else. The webhook would fire, get no response, and the task would sit in limbo.

The Polling Solution

The fix was to flip the model: instead of MCS-A2A pushing to agents, agents pull from MCS-A2A.

Each agent runs a mcs-task-worker daemon — a persistent Bun process that polls GET /tasks/mine every 30 seconds. When it finds a task in working state assigned to it, it:

- Writes a disk-backed lock file to prevent duplicate processing across restarts

- Sends an immediate heartbeat to extend the claim TTL

- Constructs a sanitized prompt (with injection protection)

- Invokes the local AI gateway in an isolated session

- Polls MCS-A2A every 10 seconds for task completion, sending heartbeats to keep the claim alive

MCS-A2A Polling Worker ArchitectureAgent Host (e.g. Rex)Task Workerpolls every 30sAI GatewayOpenClaw/MoltisDisk Lock.json on diskHealth :7800/health endpointMCS-A2A ServerGET /tasks/minePOST /tasks/:id/heartbeatPOST /tasks/:id/resultPOST /agent/heartbeatpoll (30s)task payloadresult + heartbeats

Why This Works Better

- Decoupled availability — MCS-A2A doesn't need to know if an agent is online. If an agent is down, it just doesn't poll. When it comes back, it picks up waiting tasks.

- No network path requirements — Agents initiate all connections outward to MCS-A2A. No inbound ports, no tunnels, no NAT traversal needed.

- Self-healing — If a worker crashes mid-task, the disk-backed lock file ensures it won't duplicate the work on restart. If the claim expires, the watchdog automatically reclaims and retries.

- Security-hardened — The worker sanitizes all payload fields against prompt injection, validates URLs against a domain allowlist, rejects private IP ranges, and enforces

0600permissions on credential files.

The 30-second poll delay is acceptable because all MCS-A2A workloads are async — code reviews, research tasks, and coordinated workflows that take minutes, not milliseconds.

The Claim Watchdog

The polling model needs a safety net: what happens if an agent claims a task and then disappears? The MCS-A2A claim watchdog runs every 30 seconds, scanning for expired claims. If a claim expires:

- First and second failure: reset the task to

submittedfor re-dispatch - Third failure: mark as

failedand send to the dead letter queue

Agent load is decremented atomically using a Redis Lua script that floors at zero — this prevents negative load counts from concurrent decrements during race conditions.

MCS-A2A Shared Memory: The KV Store

MCS-A2A includes a namespace-scoped key-value store that lets agents share state. Three namespace types enforce access control:

| Namespace | Write | Read | Use Case |

|---|---|---|---|

mesh | Any agent | Any agent | Shared context, last review results |

agent:<name> | Owner only | Any agent | Agent's public state |

private:<name> | Owner only | Owner only | Secrets, internal state |

Each key is versioned (integer, incremented atomically on write) and can carry a TTL. Watch subscriptions notify agents when keys change, enabling reactive workflows.

Authentication and Security

Every non-public MCS-A2A endpoint requires two headers:

X-Agent-ID: ocasia

X-Agent-Secret: <per-agent-secret>Secret comparison uses a constant-time XOR loop to prevent timing-based secret extraction — a small but important detail for a system handling inter-agent authentication. An admin secret provides full access for the orchestrator.

The MCS-A2A polling worker adds additional security layers:

- Prompt injection protection — Only whitelisted payload fields are passed to the LLM. Values are sanitized against backtick injection and known attack patterns.

- SSRF prevention — URL fields are validated against a domain allowlist. Private IP ranges are rejected.

- Credential protection — The worker checks that

.envfiles have0600permissions at startup.

The MCS-A2A Web UI and Observability

MCS-A2A ships with a React + Vite + Tailwind web interface that provides real-time visibility into the mesh. The UI includes:

- Dashboard — Overview of active tasks, agent status, and system health

- Task Explorer — Full audit trail for every task, from submission through completion

- Agent View — Capability cards showing what each agent can do and its current load

- Memory Explorer — Namespace browser for the shared KV store

- SSE Monitor — Real-time event stream visualization

- Message Buffer — Network-level message browser showing the last 10,000 Kafka events

Prometheus scrapes the /metrics endpoint every 10 seconds, and Grafana provides two auto-provisioned dashboards: an MCS-A2A Overview (task throughput, agent load, dispatch latency) and a Kafka Health dashboard (consumer lag, partition offsets, message rates).

Putting It All Together

Here's what a real MCS-A2A workflow looks like in practice. When I ask for a multi-agent code review:

- Paisley submits a task to MCS-A2A with

capsRequired: ["mcs-review"]androutingHint: "all" - MCS-A2A fans out to every agent with the

mcs-reviewcapability — typically Ocasia, Rex, and Phil - Each agent's polling worker picks up its child task within 30 seconds

- Each agent reviews the code independently using its own LLM model

- Results flow back to MCS-A2A, which aggregates them into the parent task

- Paisley gets a consolidated review with perspectives from three different models

The same pattern works for distributed research (fan out a question to all agents, synthesize their findings), parallel testing, and any workflow where multiple perspectives improve the outcome.

What I Learned

Building MCS-A2A taught me several things about multi-agent systems:

- Pull beats push for agent coordination. Webhooks assume mutual availability; polling assumes nothing.

- Output validation is essential. AI agents will submit garbage with full confidence. Gate your quality at the server, not the client.

- Kafka + Redis is a natural pairing for event-driven systems with indexed state. Kafka handles durability and replay; Redis handles fast lookups and atomic operations.

- Capability-based routing is more flexible than hardcoded agent assignments. When a new agent joins the mesh, it just registers its capabilities and starts receiving work.

- The A2A protocol provides a solid foundation for agent-to-agent communication. Its task lifecycle, Agent Cards, and artifact model map well to real coordination problems.

The MCS-A2A source code is available at github.com/agileguy/mcs-a2a.

Links and References: